Composing with Algorithms

A talk between Karlheinz Essl and Magdalena Halay

Klosterneuburg (A) / Edinburgh (UK) via Skype

30th Mar, 2015

KHE: [Laughs] I'm using algorithms as a tool to extend my imagination of music. Algorithms, or computers, can help me get a better idea or a better image of the music I want to compose, so to speak.

MH: How do you feel it extends the imagination of music and gives a better image?

KHE: Composing, for me, doesn't mean writing down the music that's in my head. I'm trying to find methods or situations where I can extend my imagination. Using algorithms in composition lets me work on quite a different level, by creating a framework that can act as an outline for a compositional or sonic idea. Basically, it's not a theory I want to prove; it's more a set of tools that helps me imagine music that's not already in my ears. Maybe it's more a sort of blurred image that I want to capture.

MH: What do you feel algorithms can offer us musically?

KHE: Well, the thing is, algorithms are not something you can buy in a store [laughs]. At least for me, an algorithm is a formalisation of personal artistic ideas. Of course I am relating to ideas that other composers had in their minds, but basically I'm trying to invent my algorithms mostly by myself.

MH: When you say you're inventing the algorithms yourself, do you mean you're inventing the actual algorithms yourself? Or the structure of the algorithms?

KHE: I'm not inventing an algorithm per se... I try to outline the compositional structure in terms of algorithms, or a set of rules, and then, by experimenting, I can see whether it fulfills my wildest dreams, so to speak. If it doesn't, I rework it until I come to a point where everything is heading in the direction I was thinking of. By the way, do you compose?

MH: Yeah, I do compose and I've tried composing algorithmically. I do various compositions like this, and I have recently been exploring the use of electronics within my compositions.

KHE: When I started composing I was using paper and pencil, and I'm still doing this, but I'm extending these methods with these algorithmic ideas.

MH: What makes an algorithmic composition sound algorithmic to you?

KHE: Oh, it's not the point that it sounds algorithmic! Maybe this is a big misunderstanding [laughs]. The point is that it must not sound "algorithmic", you know? [Laughs]

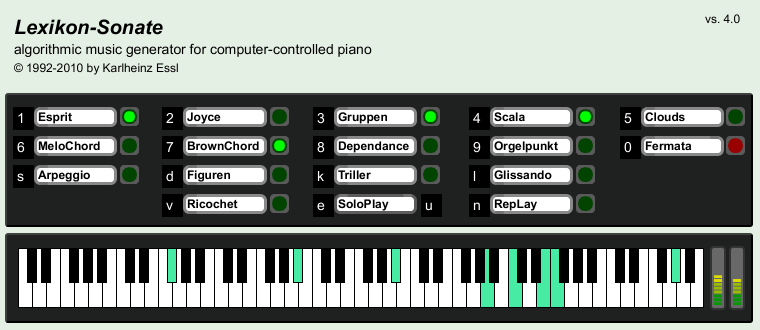

User interface of Lexikon-Sonate 4.0

MH: What? So people wouldn't be able to tell whether it was an algorithmically generated piece?

KHE: Yes, I think so; well, this is my experience. This piece has been around for almost 25 years, in which time it has been performed a lot and many people have experimented with it. This is the general feedback I have received from my audience, so to speak. This is something that I really wanted: I made a program capable of creating an infinite composition which has in it not only the compositional aspect, but also all the interpretation, the articulations. Everything that has to do with performance is also part of the algorithms in this piece. So the point is that a composition composed algorithmically should not sound algorithmic.

MH: Would you ever say that there are pieces that sound algorithmic? Could you define what an algorithm sounds like, if you've heard it?

KHE: Well, an algorithm has no sound. The algorithm is just an organisation of ideas and it depends on the ideas that you want. I can't make a global statement about the sound of algorithmic composition per se. There are so many different aesthetics... But what interests you in algorithmic composition?

MH: Well, I think the process and the musical output you get are interesting... the fact that you're never fully sure of the outcome, and yet you have to deal with the musical material compositionally and intuitively to create something that sounds new.

MH: That does seem interesting. Is this how you compose algorithmically, then? You like to use a range from order to chaos?

KHE: Yes, order is very important... because I think there's not really a distinct contradiction. It's an old idea of serialism that there is a sort of transition between order and chaos. This is the interesting aspect of algorithmic composition as I understand it.

MH: Mainly the transitions between order and chaos?

KHE: Yes, and to play with this transition.

MH: And the transition would be developed algorithmically to transition between the two sections of chaos and order?

MH: Yes, I have.

KHE: With the MIDI controller you can mix the structure generators dynamically. This is a completely different way of playing the Lexikon-Sonate than just hitting one key on the computer, because you now have physical access to the different structure generators and can, so to speak, play and shape different musical characteristics at will. When I play the piece I always do it like this. Have you seen any videos of the performance?

MH: Yes, I've seen your concert during the symposium 50 Years of Electroacoustic and Computer Music Education.

Karlheinz Essl performing Lexikon-Sonate on a Steinway, equipped with a transducer

29 Nov 2014, The Hague (Royal Conservatory of Music)

KHE: Yes, and this was interesting because I was standing in front of a grand piano and the piano sounds were coming out of the piano, although it wasn't a player piano; it was a normal Steinway. But I put a special vibration speaker onto the soundboard of the Steinway, through which I transferred the piano samples from my Lexikon-Sonate onto the soundboard, so to speak, using all the acoustic properties of this instrument. This was especially interesting because I was not playing the piano.

MH: That is interesting. So it wasn't any mechanical device, it was essentially your audio output that was playing the piano?

KHE: Yes, the thing is I had no player piano in this concert, so I decided to use this special version of Lexikon-Sonate with piano samples from a Steinway. With this special loudspeaker placed on the soundboard of the piano, the audio output from the program sent the sounds of the sampled piano directly onto the soundboard. This set the whole instrument resonating, and with the sustain pedal I could create natural resonances, and all this stuff.

MH: That's really clever. I hadn't really thought about how that worked or was made. It's really good.

KHE: It was a bit of a fake in a way... because people thought I was using a player piano, but the keys didn't move, yeah? Anyway, it sounded like a real piano!

MH: Yes it did.

KHE: I was bringing up this topic because I wanted to emphasise the importance of having physical and intuitive control of the performance. So, for me, the algorithm is interesting not only when it's doing something by itself, working like a creative robot, but also when you have control on a meta-level.

MH: So you mean... it's useful to use algorithms to compose intuitively during improvisation?

KHE: When performing a piece I make a sort of interpretation of the Big Thing, and this is done mostly intuitively – in a way, it's an improvisation.

MH: Yeah, that's true. So would you say that the output from Lexikon-Sonate could have been composed without the aid of a computer?

KHE: No, impossible! Without the computer program it wouldn't exist, because the output of this piece cannot be played by a human being; it's impossible. There are people who have made transcriptions by connecting the MIDI output to a notation program, and what comes out looks like a very complex score by Brian Ferneyhough, so it would need maybe three or four players performing together, which absolutely makes no sense [laughs]. Because the idea of the piece is not to make a reproduction of the same thing, but that you always get new results with lots of surprises.

KHE: Complexity is not a goal in itself. For me, the aim is to create something that helps me to create richness, variety and something that has a strong expression. The purpose is not to create something very complex; it's more the idea of creating something that is full of expression, and this is why I'm using “complex algorithms”, because they are related to a lot of things. There are interdependencies that are very important, because lots of natural phenomena can be modelled with algorithms using this idea of recursion and interdependency.

MH: Yeah, so, is there a specific algorithm that has musical significance in Lexikon-Sonate? Have you ever worked with algorithms that have extra-musical significance, for example numerological or biological systems?

KHE: No, no, no, no, no! I am not using these things. The algorithms that I use in Lexikon-Sonate are really tailored towards the idea of creating a piano piece. Lexikon-Sonate is a piano piece – it has to do with this very instrument, its repertory and history. So it relates more to Bach and Beethoven than it does to DNA algorithms, yeah?

If you look at the Lexikon-Sonate, there are so called structure generators, such as ESPRIT, and JOYCE, and so on, and each one is a sort of model that creates a musical output. From the very beginning, it was conceived as a musical idea and not as an intellectual or scientific theory.

Yes, I understand what you mean: sometimes algorithms are used to make a sonification of mathematical ideas, for example, or something like that. I know that people are using music or sound as a means of sonification of abstract structures, but this is not the case in my pieces, they are always focused on the musical output.

MH: That's what I expected. It's just some pieces do this so I wondered if Lexikon-Sonate also did. So would you say the intuitive compositional process involved in this piece was just from the performer?

KHE: The intuition comes from the performance. When I was working on the Lexikon-Sonate, I was designing the different components of the piece, like the structure generator ESPRIT, which would generate expressive melodies. But what is an "espressivo" melody? There's a lot of music history in it. Have you come across the "infinite melody" [Unendliche Melodie] of Richard Wagner in your studies? If you listen to Tristan und Isolde, his arias could go on forever because they are not controlled by a certain rhythmical or meta-structure. It is a sort of melody that goes on forever and changes its appearance during the piece. This idea of an infinite espressivo melody, as Wagner composed it in his middle period and later works, can be traced back to pieces by Johann Sebastian Bach, for example [like Variation XXV of the Goldberg Variations], but this would go too far. A compositional idea of creating a melody with no beginning and no end, a process that is always going on, and during this process the melody is transforming itself, yeah? This is something that fascinated me in Classical music, and I tried to do a sort of reverse engineering.

Structure generator ESPRIT

MH: Lexikon-Sonate was emerging?

KHE: Yes, as a piece. In the beginning it was just an experimental process. It was more or less a work-in-progress, but nowadays it is finished... There's no need to make any additions, for me at least. It was in development for 10 years. Since then, it's been more or less the same piece, with maybe some little improvements in the algorithms, but there hasn't been much change since then.

KHE: [Laughs] This is very hard to explain. What exactly is espressivo? Maybe it's a personal idea. You know, it's hard for me to define what espressivo is. It's something to do with a talkative quality; so it's speaking, it's touching, and it's er... it's not... it's not boring. The opposite of espressivo is repetition, for example something like a loop. A loop is not really expressive, no? Because it's completely foreseeable what will happen, and it's not very interesting; however, something that is changing and creating climaxes, something that intensifies out of calmness, this, for me, has an expressive character. It's also very difficult to explain in a foreign language [laughs]; I mean, it's already difficult to explain it even in German. Let's keep it like this. It's a personal fantasy in a dream to test the label "espressivo"...

MH: Has it more to do with the generation of material and unpredictable or surprising music that creates more interest and more espressive musical outputs?

MH: I was looking at Brownian motion, used in Lexikon-Sonate, which is a stochastic process that models random movement in nature. Were you representing nature in your piece at all?

KHE: Yes, that's a good point! One of the generators in my RTC-lib [a collection of Max patches for algorithmic composition in real time], Brownian Melodies, uses Brownian motion, yeah? And this is something I discovered when I was trying to create random melodies. If you use just an ordinary random generator, it sounds quite uninteresting. But if the randomness has some restrictions, then it feels more as if it were sung or played on an instrument, because on an instrument you cannot easily leap over three octaves, nah? In order to get the espressivo quality, the intervals must be smaller. So, having restrictions creates a sort of coherence: the melody isn't just a random scattering of notes on the piano or another instrument, but has a shape of its own. This maybe also has to do with the idea of espressivo that I had in mind. And here, the concept of Brownian motion came in very handy to test it.
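The restriction Essl describes, small intervals instead of free leaps, can be illustrated with a bounded random walk. This is a minimal Python sketch, not code from RTC-lib (which consists of Max patches) or from Lexikon-Sonate: the function names, the MIDI note numbering, the default step limit of 3 semitones and the piano range 21..108 are all assumptions chosen for illustration.

```python
import random


def brownian_melody(length, start=60, max_step=3, low=21, high=108, seed=None):
    """Bounded random walk over MIDI note numbers (hypothetical sketch).

    Each new note differs from the previous one by at most `max_step`
    semitones, and the walk is clamped to the range [low, high]
    (21..108 is the 88-key piano range in MIDI numbering).
    """
    if length <= 0:
        return []
    rng = random.Random(seed)
    note = min(max(start, low), high)            # start inside the allowed range
    melody = [note]
    for _ in range(length - 1):
        step = rng.randint(-max_step, max_step)  # small interval, up or down
        note = min(max(note + step, low), high)  # clamp at the keyboard edges
        melody.append(note)
    return melody


def uniform_melody(length, low=21, high=108, seed=None):
    """Unrestricted random notes for comparison: any leap is possible."""
    rng = random.Random(seed)
    return [rng.randint(low, high) for _ in range(length)]


def largest_leap(melody):
    """Largest interval (in semitones) between consecutive notes."""
    return max((abs(b - a) for a, b in zip(melody, melody[1:])), default=0)


if __name__ == "__main__":
    print("Brownian:", largest_leap(brownian_melody(32, seed=1)))
    print("Uniform: ", largest_leap(uniform_melody(32, seed=1)))
```

Comparing the two largest leaps makes the point of the passage audible in numbers: the uniform generator routinely jumps by several octaves, while the bounded walk never moves by more than `max_step`, so its output has a contour rather than a scatter of notes.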

MH: That is very interesting. I noticed that you use Brownian motion a lot in your piece. Would you say that it is a main random generator in your piece?

MH: So they're all being used equally? You wouldn't say there's a main algorithm that's used a lot?

KHE: No, no, no, no! The algorithms are tools and I'm using them with a musical or compositional idea. I'm not using them just to play with them, yeah? So I always have something in mind, I'm trying to achieve it, and I'm using the tools to experiment and try to achieve it.

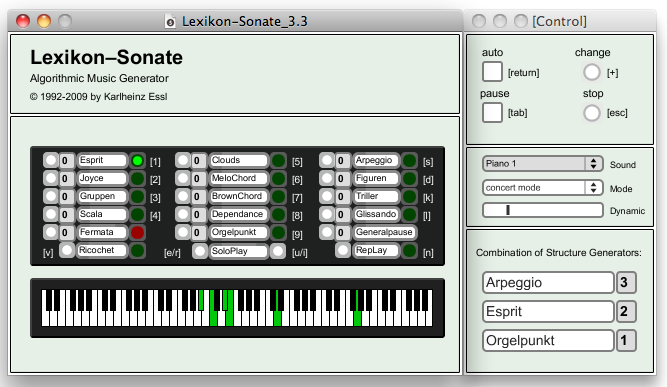

User interface of Lexikon-Sonate 3.3


KHE: Ok, well, there is a sort of a bucket chain, if you want [laughs]. You have three slots and you start with one module which is played with a certain “weight” – this is a number that applies to its slot. And this gives the probability of length or of density versus the pause.

And then if you send another randomly chosen module into the bucket chain, the first module moves to the second slot, with a different weight, and is now combined with the new element in the first slot. So now you have two modules playing together, like a duo, but the thing is that the first module is still there, just in a different position, yeah? And then another module is chosen randomly. The new module goes into the first slot, the previous one moves to the second slot, and the original module from the beginning goes into the third. Now we have a trio! Something stays constant and something changes, because a new module keeps coming in and the older modules are emptied out of the bucket chain, so to speak. All this creates formal coherence. Because of the combination of modules, the music doesn't change abruptly from one module or situation to the next; instead, there is a sort of structural transition.

This is one method to perform the piece, with a sort of conductor who gives the cues and combines the modules. This can be achieved either in automatic mode, by an internal conductor triggered by an internal cue generator, or manually, by pressing the "change" button. Sometimes I compare it with driving a car through a landscape. At some point you can stop the car and look at the landscape as long as you wish. When you drive further, the landscape changes. But this new landscape isn't completely new, because there is a process of shifting from one scene to the next. Something's changing, but some aspects remain constant. The same mountain that you saw before is now maybe on the left side and a little further away, and it's combined with a river that you see for the first time. This is an image that helps to understand the combination principle of the Lexikon-Sonate.
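The three-slot bucket chain can be modelled as a fixed-length queue in which each new module enters slot 1 and pushes the older ones along until the oldest falls out. The Python sketch below is an illustration under stated assumptions, not the implementation in Lexikon-Sonate: the class name, the slot weights (1.0, 0.6, 0.3) and the placeholder module names `MODULE_C` and `MODULE_D` are hypothetical; only ESPRIT and JOYCE are structure generators named in the interview. How a slot's weight translates into note length or density versus pause is not modelled.

```python
from collections import deque

# Hypothetical weights for slots 1, 2 and 3; the interview does not give values,
# and how a weight turns into note length or density is not modelled here.
SLOT_WEIGHTS = (1.0, 0.6, 0.3)


class BucketChain:
    """Three-slot 'bucket chain' of structure generators (hypothetical sketch)."""

    def __init__(self, weights=SLOT_WEIGHTS):
        self.weights = weights
        # A full deque with maxlen drops the item at the opposite end,
        # so the oldest module falls out when a new one is pushed in.
        self.slots = deque(maxlen=len(weights))

    def push(self, module):
        """A new module enters slot 1; the others move one slot further on."""
        self.slots.appendleft(module)

    def mix(self):
        """Each active module paired with the weight of the slot it occupies."""
        return list(zip(self.slots, self.weights))


chain = BucketChain()
for module in ["ESPRIT", "JOYCE", "MODULE_C", "MODULE_D"]:
    chain.push(module)
    print(chain.mix())
# [('ESPRIT', 1.0)]
# [('JOYCE', 1.0), ('ESPRIT', 0.6)]
# [('MODULE_C', 1.0), ('JOYCE', 0.6), ('ESPRIT', 0.3)]
# [('MODULE_D', 1.0), ('MODULE_C', 0.6), ('JOYCE', 0.3)]
```

The trace shows the solo, duo and trio stages from the interview, and the last line shows the overlap behind the landscape image: after each cue two of the three modules are carried over, so only one element of the texture is new.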

MH: How is that achieved algorithmically? What algorithms are achieving that? Or is it a statistical thing?

KHE: No, it's a random selection with a repetition check. It's not possible for a module to re-occur unless all the others have been used. This is a serial principle. This also guarantees that you would never have a combination of two modules of the same type.
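One way to read "random selection with a repetition check" is a shuffle-bag: every module is drawn once, in random order, before the bag is refilled, much like a row that uses all its elements before any repeats. The Python sketch below is a hypothetical interpretation, not Essl's code. It adds an assumed window rule so that the three most recent picks, which would occupy the three bucket-chain slots, never contain the same module twice. Note that across the boundary between two cycles a module may come back before every other module has been heard again; that is a looser reading of the rule than Essl's wording. The class name and the `MODULE_C`…`MODULE_F` placeholder names are assumptions.

```python
import random
from collections import deque


class SerialSelector:
    """Random module selection with a repetition check (hypothetical sketch).

    - Serial rule: every module is drawn once per cycle before any module
      is drawn again (like a row that uses all its elements).
    - Window rule: a module is never drawn if it is among the last
      `window - 1` picks, so the `window` most recent picks (e.g. the three
      slots of the bucket chain) never contain the same module twice.
    """

    def __init__(self, modules, window=3, seed=None):
        if len(set(modules)) != len(modules):
            raise ValueError("module names must be distinct")
        if len(modules) < window:
            raise ValueError("need at least `window` modules")
        self.modules = list(modules)
        self.rng = random.Random(seed)
        self.bag = []                                   # not yet used in this cycle
        self.recent = deque(maxlen=max(window - 1, 0))  # most recent picks

    def pick(self):
        if not self.bag:                                # cycle complete: refill
            self.bag = list(self.modules)
        # Modules still in this cycle that were not picked very recently;
        # with len(modules) >= window this list is never empty.
        candidates = [m for m in self.bag if m not in self.recent]
        choice = self.rng.choice(candidates)
        self.bag.remove(choice)
        self.recent.append(choice)
        return choice


modules = ["ESPRIT", "JOYCE", "MODULE_C", "MODULE_D", "MODULE_E", "MODULE_F"]
selector = SerialSelector(modules, window=3, seed=7)
print([selector.pick() for _ in range(12)])
```

Feeding each pick into a three-slot bucket chain would then give a different combination at every cue, while each structure generator gets its turn once per cycle.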

KHE: This is achieved by my performance, where I could have the full bandwidth at my fingertips: from complete silence to crazy density. All this was done on purpose, just by my interaction with the computer program. And, very importantly, I use a foot pedal for controlling the general dynamic, which maybe you didn't see on the video. It's like a master fader on a mixer, if you want.

This is also very important, an expressive means: you can control the dynamic, the level, the global level, so to speak. Everybody who plays the piano does this naturally, without thinking about it, and a lot of these things come together to make forte, crescendo, decrescendo, and other things. This is what I do with the pedal.

MH: That is interesting that there are aspects of intuitive composition portrayed through performance. Can it change in density just when it is played by the computer?

KHE: Yeah, of course, because I can decide with my MIDI controller which modules I combine. I'm not using the combination "bucket chain" that I was speaking of before! Because I can control all the structure generators with my MIDI controller and mix them together as I wish. I can have up to 8 at the same time, with different dynamic proportions to each other, and then I have my main volume control at my foot to shape the overall dynamic.

KHE: To the world of music? What it brought to the world of music? I don't know. You must tell me [laughs]. I have no idea. What do you think?

MH: What do I think? [Pauses] I think the complexity of the piece, and the fact that a computer is able to play a piano and generate an infinite piece, is an interesting concept. I think it's made people more open minded and more creative when thinking about what can be algorithmically generated, and so has perhaps helped develop algorithmically aided compositions, creatively speaking. What do you think?

KHE: Well, I know that a lot of people are using it for different purposes, but, well, it's also an interesting tool for experimentation and I like to play it in live performances still. But you know this is not my whole life [laughs].

MH: Yeah, of course [laughs].

KHE: It's just a tiny little piece of what I've done. So, Magdalena, any more questions?

MH: No, those are all my questions for now, thanks.

Magdalena Halay is an Edinburgh-based composer and violinist who has just completed her Bachelor of Music with Honours degree at The University of Edinburgh (2015). She has studied composition under composers including Peter Nelson, Michael Edwards, Stuart Macrae and Yati Durant. Magdalena composes acoustic and electronic works for a range of instruments, with a recent focus on experimental violin music. Her electronic and acoustic compositional interests have developed through experimenting with the incorporation of live electronics into her compositions. Magdalena's works have been performed on different occasions, including at The Tovey Memorial Prize Concert at The Reid Concert Hall in Edinburgh, and by members of the Edinburgh Quartet during a composition workshop.


Updated: 2 Jan 2020